Yuliia Kaveshnikova/iStock

The way we think about our process will shape the way we collect, analyze, and interpret our data when things go wrong. This in turn will shape the actions taken and the results obtained. In this column, we look at an example of the difference between the traditional approach and an alternative approach to thinking about our processes.

An example

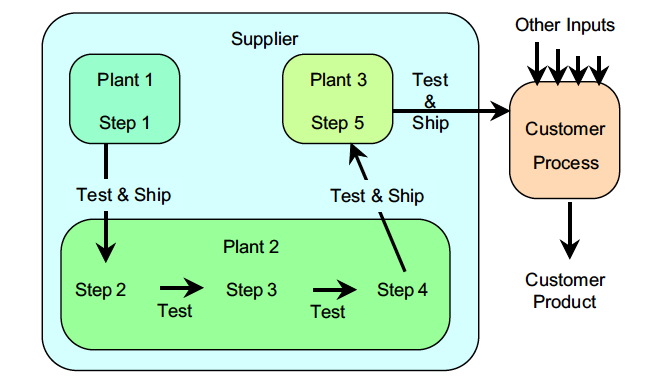

Product 26 is a specialty chemical, produced by one supplier and used by one customer. The production of this chemical involves five different steps. Step 1 is the production of base fluid. The base fluid is shipped by truck to Plant 2 for steps 2, 3, and 4. The resulting intermediate product is then shipped to Plant 3 for Step 5. At the end of Step 5, Product 26 is placed in barrels and shipped to the customer. After each step, properties specific to that step are tested before the material is moved to the next step. Because the three plants are in different states, a batch of Product 26 takes at least three days to produce, and initially the supplier was producing five batches per month.

Figure 1: Product 26 production process

…

Comments

Amen!

Unfortunately, most improvement projects fail to implement the "C" in DMAIC. No control charts, no corrective actions, and then teams wonder why the improvement falls back to previous levels after a period of time.

Stop admiring the problem.

Using modern SPC software, it's easy to monitor performance. It just takes a little more rigor and delivers amazing results.

Six Sigma

Why are you using a specification-based approach (Sick Sigma and DMAIC) rather than Professor Deming's PDSA? Six Sigma's creator (a school teacher turned self-confessed con man) never mentioned control charts, which are at the heart of modern quality. Counting Six Sigma's 3.4 DPMO is pure farce.

91% of Six Sigma companies have failed (Qualpro survey). Claimed “successes” of the Scam include a long-term study of hundreds of Six Sigma projects at Ford, which showed an average of 1 in 5 parts defective AFTER improvement for “successful” Six Sigma projects.

Unmatched Simplicity of Style and Clarity of Content

Dear Dr. Wheeler,

I never cease to admire your writing style. In simple language, you explain effective methods for solving production process problems, problems that arise from ignoring the voice of the process as shown on Shewhart control charts (process behavior charts).

I hope that someday I can come close to your style.

Simple but effective

Yet another great example from Dr. Wheeler on how the plotting of the data in context can yield an outcome that is otherwise missed. Thanks!
