Setting the process aim is a key element in the short production runs that characterize the lean production of multiple products. Last month, in part one, we looked at how to use a target-centered XmR chart to reliably set the aim. This column describes aim-setting plans that use the average of multiple measurements.

The necessity of process predictability

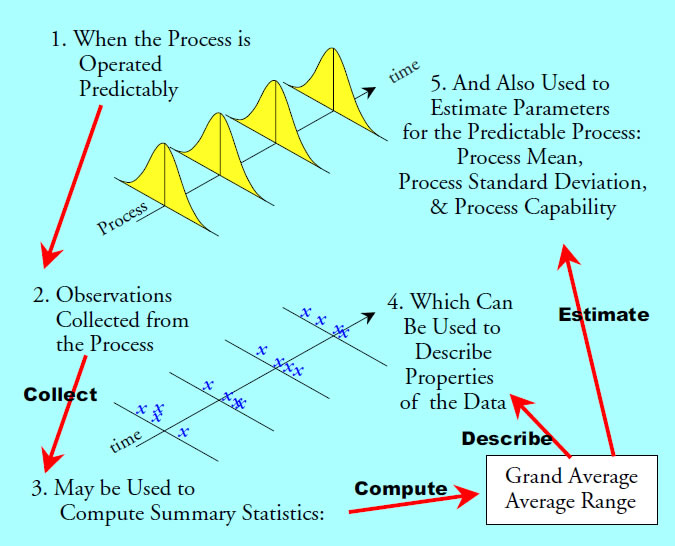

All effective aim-setting procedures are built upon the notion of a process standard deviation. Some estimate of this dispersion parameter is used to determine the decision rules for adjusting, or not adjusting, the process aim. When a process is operated predictably, the idea of a single dispersion parameter makes sense.
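As an illustration of what such an estimate can look like, here is a minimal Python sketch. It assumes the estimate comes from the average moving range of individual values, as on an XmR chart, divided by the bias-correction constant 1.128 for ranges of two values. It also shows how the dispersion of an average of n measurements shrinks by the square root of n, assuming independent measurements. The function names and the sample data are hypothetical, and this is not necessarily the plan the column goes on to describe.

```python
from statistics import mean

# Bias-correction constant d2 for ranges of two values (moving ranges).
D2_N2 = 1.128


def sigma_from_moving_ranges(values):
    """Estimate the process standard deviation as (average moving range) / d2.

    Hypothetical helper for illustration only.
    """
    if len(values) < 2:
        raise ValueError("need at least two values to form a moving range")
    # Moving ranges are the absolute differences between successive values.
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    return mean(moving_ranges) / D2_N2


def sigma_of_average(sigma_x, n):
    """Standard deviation of the average of n independent measurements."""
    if n < 1:
        raise ValueError("n must be at least 1")
    return sigma_x / n ** 0.5


# Made-up measurements, used only to exercise the functions.
values = [10.0, 12.0, 11.0, 13.0, 12.0]
sigma_x = sigma_from_moving_ranges(values)
print(f"Estimated sigma(X): {sigma_x:.3f}")
print(f"Sigma of an average of 4: {sigma_of_average(sigma_x, 4):.3f}")
```

The estimate is only meaningful when the process is operated predictably. If the process is changing, no single dispersion parameter exists to be estimated.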

Figure 1: When statistics serve as estimates

…
