Don’t tell me you’re not tempted to look when you spot a magazine cover saying, “How does your state rank in [trendy topic du jour]?” Many of these alleged analyses rank groups on several factors, then compare the groups’ rank sums to draw conclusions.


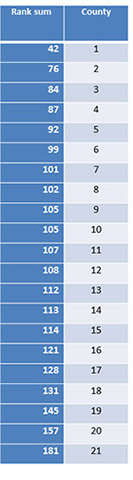

For example, in 2006, I was at a presentation by someone considered a world leader in quality (WLQ) who has been singing W. Edwards Deming’s praises since the late 1980s. He presented the following data as a bar graph, from lowest score to highest.


Each score is the sum of one county's rankings on 10 aspects of a small country’s healthcare system (considered to be on the cutting edge of quality), for each of its 21 counties. Lower sums are better: minimum = 10, maximum = 210, average = 10 × 11 = 110.
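For readers who want to check that arithmetic, here is a minimal Python sketch. The function name `rank_sum_summary` and its parameters are my own illustrative choices, not anything from the presentation; it assumes each of the 10 aspects ranks all 21 counties from 1 to 21 with no ties.

```python
def rank_sum_summary(n_groups: int, n_aspects: int) -> dict:
    """Bounds and average of a sum of ranks (hypothetical helper).

    Assumes every aspect ranks all groups 1..n_groups with no ties.
    """
    best = n_aspects * 1              # ranked 1st on every aspect
    worst = n_aspects * n_groups      # ranked last on every aspect
    mean_rank = (n_groups + 1) / 2    # average of 1..n_groups
    average = n_aspects * mean_rank   # average rank sum across all groups
    return {"min": best, "max": worst, "average": average}


summary = rank_sum_summary(n_groups=21, n_aspects=10)
print(summary)  # {'min': 10, 'max': 210, 'average': 110.0}
```

The average is fixed by the ranking scheme itself: whatever the counties actually do, their rank sums must average 110.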

My antennae went up. A bar graph? With absolutely no context of variation for interpretation? And a literal interpretation of the rankings?
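To give a feel for what "context of variation" could mean here, the sketch below simulates rank sums when the rankings are pure chance. This is an illustration under loud assumptions, not the speaker's data or the analysis from this article: it assumes all 21 counties are truly identical, the 10 aspects are independent, and ranks are assigned by random shuffle. The function name `simulate_rank_sums` and the seed are hypothetical.

```python
import random


def simulate_rank_sums(n_groups=21, n_aspects=10, seed=0):
    """Rank sums when every ranking is a random shuffle (assumption:
    no real differences between groups, independent aspects)."""
    rng = random.Random(seed)
    totals = [0] * n_groups
    for _ in range(n_aspects):
        ranks = list(range(1, n_groups + 1))
        rng.shuffle(ranks)                 # random ranking for this aspect
        for i, r in enumerate(ranks):
            totals[i] += r                 # accumulate each group's rank sum
    return sorted(totals)                  # lowest to highest, like the bar graph


totals = simulate_rank_sums()
print(totals)
print("lowest:", totals[0], "highest:", totals[-1])
```

Even with no real differences at all, the sorted sums spread out on both sides of 110, so a sorted bar graph of them will still appear to show "best" and "worst" counties.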

What’s wrong with this picture?

…

Comments

Sad "state of affairs"

Your article on Understanding Variation included the following sentence: "I once analyzed a similar state ranking. There were two states truly above average, two below average, and 48 states were indistinguishable."

Unless you are including some of the U.S. territories, with two states above average and two below average, wouldn't there be "46" states that are indistinguishable?

I never comment on statistical evaluations, but I guess there's a first time for everything. Since I was born in 1948, I can recall a time when 48 states would be indistinguishable....

Hey -- I'm a statistician...

...I deal in variation. Plus or minus two isn't so bad.

Thanks for noticing my brain flatulence -- it should be 46.

Meaning

Numbers don't mean much without an understanding of what they mean. Exactly what is being "ranked" here?

What was being ranked?

Your guess is as good as mine -- the speaker never said. But, I'm sure they were VERY important things :-)

And that's another point I could make -- how do they actually define the things they rank? It's all so "vague" -- and most analyses I've done like this exhibit the inevitable resulting wide variation.

But people do love those rankings!

Excellent

Greetings from Mexico
