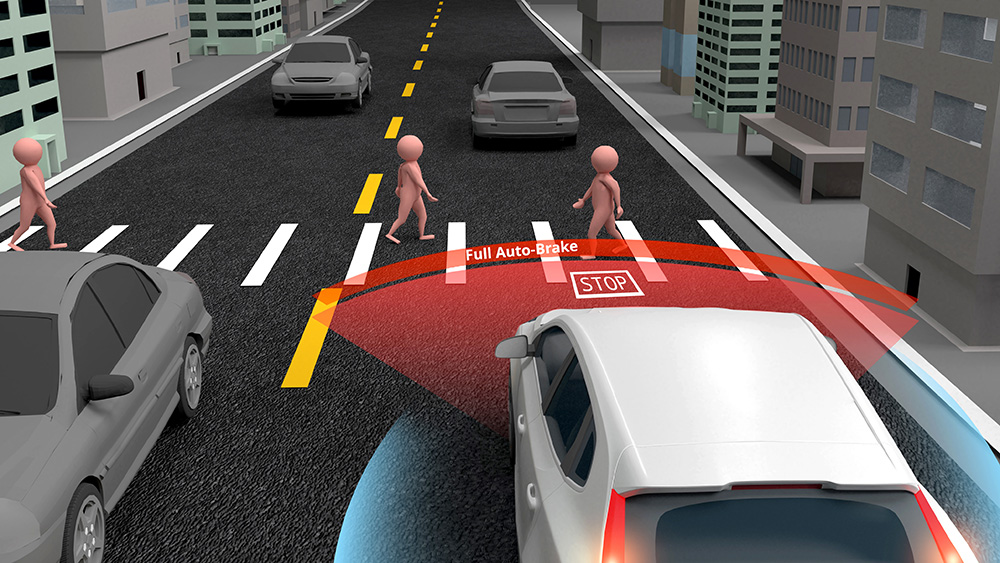

Nobody would get into a self-driving car simply because the door locks worked and the alarm system was functioning properly. Those security features protect the car from being stolen or tampered with, but they say nothing about whether the car’s AI will stop in time when a child runs into the road, or know when it’s safe to proceed at a four-way stop.

Data security alone won’t cut it

The same principles apply to AI systems in life sciences. Data security ensures your information is protected, your access is controlled, and your systems are hardened against attack. It’s critical and foundational, but it only protects the information flowing through your AI.

Your security posture says nothing about the decisions your AI is making with that information. The hard truth is that a perfectly secured system can still produce an unsafe outcome when decisions are made with AI. The gap between security and AI governance is what ISO 42001 was designed to fill.

Great security doesn’t mean safe AI

Since its creation, ISO 27001 has been one of the industry’s gold standards for information security management. It answers critical questions about data security: Are access controls in place? Is encryption working? Are vulnerabilities being identified and patched? These are the locks, the alarm system, and the tamper alerts on the self-driving car. They are nonnegotiable and foundational. But ISO 27001 was never designed to answer the harder question: Is the AI behind the wheel actually making good decisions?

ISO 42001 builds on that foundation and adds an entirely new layer of requirements. If ISO 27001 secures the car from outside threats, ISO 42001 governs the intelligence doing the driving. It covers the collision detection logic, the lane-keeping behavior, the decision-making hierarchy when the car faces a split-second choice, and the accountability structure for when something goes wrong. ISO 27001 keeps bad actors out of the car. ISO 42001 ensures the car itself doesn’t become the bad actor.

For AI systems in life sciences, that second layer means establishing governance around three questions that no security posture can answer: Who owns the AI’s decisions? What outputs are acceptable? And what happens when the system gets it wrong?

These aren’t abstract technical concerns. When AI is drafting regulatory submissions, generating training materials, or translating technical documentation for global markets, ungoverned decision-making isn’t just a compliance risk. It’s a patient safety risk. Securing your infrastructure is necessary but insufficient. You also need transparent, auditable control over what your AI does with your data once it has access.

The end of ‘trust us’

Here’s a fact that surprises most people: Medical device manufacturers in the United States weren’t required to demonstrate that their products were safe and effective before marketing them until the Medical Device Amendments of 1976. Before that, a company could bring a device to market claiming it could cure brain cancer or prevent a heart attack without first demonstrating it was effective or safe.

AI in regulated industries has been living in a strikingly similar era. Companies pointed to their proprietary code and in-house algorithms, saying, in effect, “trust us.” For a while, that was enough. The technology was so new and complex that even regulators struggled to understand what was happening under the hood.

Those days are over. Regulators and industry leaders now understand exactly what these systems do and what risks they carry: bias baked into training data, hallucinations in outputs, and automation bias where humans stop questioning what the AI recommends.

If I need a pacemaker, I want documented proof that it’s safe and effective before it ever goes into my body. The same standard should apply to any AI system making decisions that affect patient safety, product quality, or regulatory standing. The “trust us” mentality isn’t acceptable in regulated industries, and it shouldn’t be for AI, either.

Human in the loop: Where AI stops and humans begin

Think of AI in life sciences as an exceptionally talented intern on their first rotation through your regulatory affairs department. They can research predicate devices, help structure a technical file for CE marking, draft sections of a design history file, or compile adverse event data for an FDA MedWatch report. That is genuinely useful work that saves real time. But no responsible quality director would let that intern make a final call on a corrective action, approve a change control, or submit a premarket approval (PMA) application to the FDA without experienced eyes reviewing every word first. In regulated industries, the intern does the heavy lifting. The qualified human makes the call.

That’s the principle behind human-in-the-loop design, and it sits at the core of what ISO 42001 requires. Human review isn’t a checkbox. It’s a documented, verifiable part of the process. In a properly governed AI system, the audit trail is not bureaucracy. It’s accountability. AI can’t and shouldn’t replace human oversight and judgment.

No self-driving car is built without a fail-safe. The moment conditions exceed what the system was designed to handle, the car is programmed to stop, alert, and wait for human intervention. ISO 42001 builds that same logic into your AI governance. There are decisions the system simply can’t finalize on its own. That isn’t a limitation; it’s the design.
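As a rough illustration of that fail-safe logic, here is a minimal Python sketch. Everything in it is an assumption made for the example: the decision names, the `route` function, and the confidence threshold are hypothetical and are not prescribed by ISO 42001. The point is only that some decision types are hard-coded as human-only, and anything the system is unsure about stops and waits.

```python
# Hypothetical decision types that the system may never finalize on its own.
HUMAN_ONLY_DECISIONS = {
    "close_corrective_action",
    "approve_change_control",
    "submit_pma",
}


def route(decision_type: str, confidence: float, min_confidence: float = 0.9) -> str:
    """Decide whether the AI may draft the work or must stop and escalate.

    `confidence` and `min_confidence` are illustrative stand-ins for whatever
    signal a real system uses to tell that it is outside its design envelope.
    """
    if decision_type in HUMAN_ONLY_DECISIONS:
        return "escalate_to_human"  # reserved for a qualified person
    if confidence < min_confidence:
        return "escalate_to_human"  # conditions exceed what the system handles
    return "draft_for_review"       # AI drafts, a human still reviews


if __name__ == "__main__":
    print(route("submit_pma", 0.99))
    print(route("summarize_literature", 0.50))
    print(route("summarize_literature", 0.95))
```

Note that even the best case returns a draft for review, not a finished decision. The human-only list is checked first so that no confidence score can override it.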

If you can’t audit it, you can’t trust it

One of the biggest challenges in AI governance is making highly technical controls understandable and verifiable to the people who need to trust them. This is where concepts like retrieval-augmented generation (RAG) and knowledge graphs move from technical jargon to genuine governance tools.

Think of RAG and knowledge graphs as the navigation system in the self-driving car. The car doesn’t guess at directions or invent roads that don’t exist. It draws from verified, authoritative maps. RAG works the same way. When the AI generates a response, it pulls from domain-specific, validated content, rather than fabricating answers from thin air. Under ISO 42001, these are documented and auditable controls, not just engineering choices made in the background.
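To make the idea concrete, here is a deliberately simplified Python sketch of the retrieval step. It is not how any particular product works: the `Document` class, the `validated` flag, the sample document IDs, and the word-overlap ranking are all assumptions chosen for illustration (real systems typically use embeddings or a knowledge graph for retrieval). What it shows is the governance-relevant part: only validated content can be used as a source, and the IDs of the sources travel with the prompt so they can be audited.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    validated: bool  # hypothetical flag: content passed a documented review


def retrieve(query: str, corpus: list[Document], top_k: int = 2) -> list[Document]:
    """Rank validated documents by how many query words they share."""
    query_words = set(query.lower().split())
    scored = []
    for doc in corpus:
        if not doc.validated:
            continue  # unvalidated content is never eligible as a source
        overlap = len(query_words & set(doc.text.lower().split()))
        if overlap > 0:
            scored.append((overlap, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_grounded_prompt(query: str, corpus: list[Document]) -> dict:
    """Build a prompt restricted to retrieved sources, plus an auditable source list."""
    sources = retrieve(query, corpus)
    if not sources:
        # Nothing authoritative to draw from: do not generate at all.
        return {"prompt": None, "source_ids": []}
    context = "\n".join(f"[{d.doc_id}] {d.text}" for d in sources)
    prompt = f"Answer using only these sources:\n{context}\n\nQuestion: {query}"
    return {"prompt": prompt, "source_ids": [d.doc_id for d in sources]}


if __name__ == "__main__":
    corpus = [
        Document("SOP-001", "sterilization validation requirements for implantable devices", True),
        Document("DRAFT-007", "sterilization shortcuts for devices", False),
        Document("SOP-002", "labeling requirements for european markets", True),
    ]
    result = build_grounded_prompt("sterilization requirements for devices", corpus)
    print(result["source_ids"])
```

The unvalidated draft never appears in the source list, no matter how well it matches the question, and a query with no matching validated content produces no prompt at all.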

In a regulated industry, that distinction isn’t academic. It’s the difference between a submission that passes and one that doesn’t. Google Translate can convert words from one language to another, but it doesn’t understand medical device or pharmaceutical regulatory nuances. An AI system built specifically for life sciences ensures that technical terms carry the correct regulatory meaning across markets, not just a word-for-word translation that could create compliance risk.

Under ISO 42001, RAG and knowledge graphs become formal controls with documented validation evidence behind them. Auditors don’t have to take our word for it. They can verify it the same way an inspector verifies that a manufacturing process follows a validated protocol. Each customer receives isolated resources, including dedicated storage and compute instances, which ensures strict data segregation at every layer. That level of ownership is what makes end-to-end accountability possible—and end-to-end accountability is exactly what ISO 42001 demands.

Creating accountability that matters

In regulated industries, accountability is straightforward in principle: Trace every decision back to a specific person with the authority and oversight to have made it. AI complicates this. When a system makes a recommendation, and a human acts on it without pausing to question the logic, check the sources, and challenge the conclusion, responsibility becomes murky at best. In an industry where the FDA expects a named, qualified human being to own every critical decision, that ambiguity shows up in a failed audit, a recalled product, or a patient safety event with no clear owner.

In a self-driving car, if the vehicle runs a red light, the security system isn’t on trial—the decision-making logic is. Who built the AI logic that made that call? Who validated it before it went live? And who was responsible for catching it when it started to go wrong? ISO 42001 exists so those questions are never asked for the first time during an investigation. It demands clear, documented answers before anything ever goes live.

The framework requires documented evidence that human oversight is active and meaningful, not performative. Reviewers must engage with outputs, modify them when necessary, and explicitly approve them before the next step proceeds. That chain of documented decisions is what makes accountability real rather than theoretical.
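One way to picture that chain of documented decisions is a simple approval gate. The Python sketch below is illustrative only: the class names, fields, and the `release` function are hypothetical, and a real quality system would add authentication, electronic signatures, and tamper-evident storage. It shows the core rule: every reviewer action is logged, and nothing moves to the next step without an explicit approval on record.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ReviewRecord:
    reviewer: str
    action: str  # "modified" or "approved"
    timestamp: str
    note: str = ""


@dataclass
class AIOutput:
    output_id: str
    text: str
    approved: bool = False
    audit_trail: list[ReviewRecord] = field(default_factory=list)

    def _log(self, reviewer: str, action: str, note: str = "") -> None:
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_trail.append(ReviewRecord(reviewer, action, stamp, note))

    def modify(self, reviewer: str, new_text: str, note: str) -> None:
        """Record a human edit; any edit invalidates an earlier approval."""
        self.text = new_text
        self.approved = False
        self._log(reviewer, "modified", note)

    def approve(self, reviewer: str) -> None:
        """Record an explicit approval by a named reviewer."""
        self.approved = True
        self._log(reviewer, "approved")


def release(output: AIOutput) -> str:
    """Gate to the next step: refuses anything without a recorded approval."""
    if not output.approved:
        raise PermissionError(f"{output.output_id} has no recorded approval")
    return output.text


if __name__ == "__main__":
    draft = AIOutput("OUT-1", "AI-drafted summary")
    draft.modify("j.doe", "Corrected summary", note="fixed device classification")
    draft.approve("j.doe")
    print(release(draft))
    print([record.action for record in draft.audit_trail])
```

Resetting `approved` on every modification is a design choice in this sketch: it means the text that is released is always the text someone signed off on.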

It also requires performance monitoring across metrics like truthfulness, relevance, and bias, with regular measurement of drift and degradation over time. The self-driving car doesn’t just need to pass a test on Day One. It needs to perform reliably every single day it’s on the road.
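In practice, measuring drift can start with something as simple as comparing recent scores against a validated baseline. The sketch below assumes scores between 0 and 1 where higher is better, and uses a hypothetical tolerance of 0.05; the function name, the sample numbers, and the threshold are illustrative assumptions, not values taken from the standard.

```python
from statistics import mean


def detect_drift(baseline_scores: list[float],
                 recent_scores: list[float],
                 tolerance: float = 0.05) -> dict:
    """Flag a metric whose recent average fell more than `tolerance` below baseline."""
    baseline = mean(baseline_scores)
    recent = mean(recent_scores)
    drop = baseline - recent
    return {
        "baseline": baseline,
        "recent": recent,
        "drop": drop,
        "drifted": drop > tolerance,
    }


if __name__ == "__main__":
    # Illustrative numbers only; real scores come from a documented evaluation set.
    baseline = {"truthfulness": [0.95, 0.96, 0.94], "relevance": [0.90, 0.91, 0.89]}
    recent = {"truthfulness": [0.85, 0.86, 0.84], "relevance": [0.90, 0.89, 0.91]}
    for metric in baseline:
        report = detect_drift(baseline[metric], recent[metric])
        print(metric, "drifted" if report["drifted"] else "stable")
```

Run on a schedule and logged, a check like this turns "the model still works" from an assumption into a record.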

Knowing who is responsible in a crisis only works if those roles were established long before the crisis arrived. The provider ensures that the AI performs as designed. The customer retains full responsibility for reviewing outputs, applying domain expertise, and making every final decision. Clear lanes of ownership; no ambiguity about who answers when something goes wrong.

From ‘trust us’ to ‘prove it’

Nobody wants to climb into a self-driving car and spend the ride white-knuckled and terrified, wondering whether the AI has actually been tested, validated, and proven safe. You want to know that every system was designed with intention, that every failure mode was anticipated, and that someone with real authority signed off before you were ever asked to trust it. The same expectation is arriving for AI in life sciences, and it’s arriving faster than many organizations are prepared for.

ISO 42001 isn’t a bureaucratic hurdle. It’s the framework that makes AI trustworthy in the same way that rigorous safety engineering makes a self-driving car trustworthy. It treats governance as a continuous process rather than a one-time validation event, which is exactly how life sciences companies already think about quality and safety management.

Life sciences has never accepted “we think it works” as an answer. Every drug, every device, every process has to be proven safe, documented thoroughly, and validated before it ever reaches a patient. AI is no different.

“Trust us” has never been good enough. With AI, it’s time to prove it.

Comments

Data Security Alone Won’t Cut It for AI

How do QA professionals obtain practical training on ISO 42001, to be able to influence the design and marketing functions?