
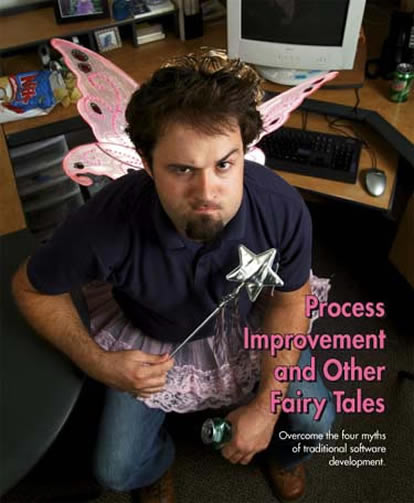

Process Improvement and Other Fairy Tales

by David Elfanbaum

If at first you don't succeed, try, try, again. Then quit.

There's no use being a damn fool about it.

--W.C. Fields

Ever since engineers first exchanged their slide rules for punch cards, information technology professionals have been working to improve the quality of software development. Despite more than a half century of Herculean effort and the almost complete disappearance of pocket protectors, recent studies report that fewer than one in three projects meet requirements and are completed on time and within budget. Is software development like baseball, where a .300 batting average is the best we can hope for, or are our efforts somehow off base?

Such concerted effort hasn't produced better results because the traditional development methodology everyone keeps trying to improve is inherently flawed. It's based on false assumptions that are best described as fairy tales or myths. As long as we keep riding the same lame unicorn, we won't run any faster, let alone fly.

Fortunately, about 10 years ago, a handful of software developers independently began to create new methods based on a more accurate grasp of reality. These methods were later formalized as a new software development methodology called "agile." Although agile is used mostly for software development, its underlying principles can be applied to quality improvement programs in any industry.

We've come a long way since the first computer bug was found and removed in 1947. For the record, it was a moth trapped in a relay of the Harvard Mark II computer. Since then, efforts to improve software development have moved forward in three waves, each focusing on a particular aspect of the development process.

The first wave focused on the evolution of software language. Machine language was the first programming language. It consisted of the zeros and ones on which computers directly operate. Machine language required programmers to explicitly code every discrete action a computer would take, so using it was time-consuming and vulnerable to error.

During the ensuing 50 years, software language evolved to abstract the binary code of machine language into human words and syntax. Today, modern languages such as Java can create the equivalent of thousands of lines of machine language with just a few lines of code from a programmer. This transformation not only spared countless developers from the agony of carpal tunnel syndrome, but also supported the rapid creation of very complex software.

As technology advanced and software projects grew more complex, organizations needed repeatable, reliable methods to manage software development and increase the likelihood of project success. Locking programmers in a room with a few cases of Mountain Dew, a family-size bag of Ruffles potato chips and a stack of comic books just didn't cut it anymore. Just as quality in manufacturing moved from mere inspection to statistical process control, the second wave of software improvement focused on standardizing a set of practices and processes that form a quantifiable methodology for software development. "Waterfall" was one of the first formalized methodologies and is the industry standard today.

The Waterfall methodology divides the development process into sequential phases that flow from top to bottom, like its namesake. These phases are:

• Gathering requirements

• Design

• Coding

• Integration

• Testing

• Installation

• Maintenance

Two key characteristics of Waterfall are that each phase must be completed before the next begins, and that once a phase is completed, it's locked and no further changes are accepted. Changes are strongly resisted because their cost rises dramatically as a project moves through its life cycle. Because each phase rests upon the one preceding it, the later a change is made, the more completed work it ripples through and forces the team to redo. Even relatively small changes late in the project can produce unexpected effects that send the project back to square one.

Despite the advance of technology and the implementation of standardized methodologies, the performance of software projects continued to be unacceptable. Most projects failed to one degree or another. The industry concluded that organizations simply weren't following the phase-based methodologies closely enough. The third wave began in the 1990s and continues today. It focuses on consistently implementing and optimizing an organization's software development methodology. The leading process improvement system is Capability Maturity Model Integration (CMMI), whose ultimate goal is to help organizations continuously improve productivity and keep quality under objective control.

After more than 50 years of technological advances and a decade of process improvement, one might expect the industry to have a foolproof system for producing successful software projects. However, according to the tenth edition of the Standish Group's CHAOS Report, the disturbing fact is that fewer than one in three projects are completed successfully, and the rate of project success has improved only marginally since 1996. (See figure 1 below.)

Why hasn't all the time, effort and money spent on process improvement delivered better results? The underlying problem is that the processes everyone is trying to optimize are inherently flawed, because they're based on four erroneous assumptions, which I call "the four myths." These myths apply to almost any kind of product, not just software.

• The myth of omniscience assumes that by the end of the requirements phase, the customer has gained and communicated complete knowledge of every single requirement that must be fulfilled by the completed software. It also assumes that developers can translate that knowledge into a design document providing the detailed blueprint to follow throughout the entire project.

Because customers don't test the software until the final phases, they only realize that they've missed key requirements as they evaluate it. By then, any necessary corrections are very expensive. The net result is either software that doesn't fully deliver the required business solution, or a project plagued by significant time and cost overruns.

• The myth of frozen time assumes that no unforeseen changes in the business or technical environment will occur during the life of a project. A Waterfall project uses the same strategy as an ostrich that buries its head in the sand and pretends nothing scary is happening around it. Unfortunately, constant change is the most predictable aspect of the modern world. Mergers, new business partnerships, technical advances and new regulations can all affect a project. The net result is software that may meet the business requirements that existed when the project started but doesn't meet current needs.

• The myth of written clarity assumes that the customer and developer can transcribe their omniscient knowledge and oracle-like precognition into complete and unambiguous requirements documents. Traditional methodology includes no systematic means for ongoing communication between the customer and the developers after the initial phases. When communication is required, it often passes through one or more intermediaries rather than directly between the people writing the code and those who will use the completed software. As in the children's game of telephone, the more people and documents stand between the programmer and the customer, the more garbled the information becomes. The net result is software that delivers what the developer thought the customer wanted but misses the mark on what the customer actually requires.

• The myth of contractual security assumes that documenting every aspect of a project ensures that developers will deliver optimal software. The customer tracks a project's ongoing performance by evaluating documentation rather than by personally interacting with the evolving software and the development team. The problem with this approach is that a project's real value can only be judged by testing and using the actual software. By the time this is possible in a Waterfall project, it's too late. The result is projects that may have checked off every box in the requirements document but fall far short of delivering exceptional software.

At about the same time that organizations started optimizing the phase-based methodology, individual software developers began to experiment with what became the agile approach. Rather than building on the myths of phase-based methodology, these pioneering coders developed methods grounded in the reality they worked in, as seen in figure 2 below.

Instead of a phased approach that follows a master plan for an entire release, agile creates software in a series of short, self-contained iterations that deliver working and tested code. Iterations are generally between one and four weeks in length.

Instead of limiting customer contact to the beginning and end of a project, agile makes the customer an integral and active part of the project team.

At the beginning of an agile project, the customer works with the developers to draw up an initial list of requirements, which is then broken into discrete segments called "stories." The customer ranks the stories by priority, and developers make rough estimates of the effort required to deliver each one. Based on these relative priorities and effort estimates, a working release plan is created. Unlike a phase-based plan, an agile project plan is considered adaptable and subject to change as the customer's priorities evolve. Release dates may be tied either to particular sets of functionality or to external events such as a trade show, demonstration or field test.
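The release-planning step above can be sketched in a few lines of Python. This is a minimal illustration under assumptions that aren't in the article: priorities are integers (1 is most important), estimates are in abstract "points," and every iteration has the same fixed capacity. The names `Story` and `plan_release` are hypothetical, and filling iterations greedily in strict priority order is just one simple way to draft a plan, not a standard agile algorithm.

```python
from dataclasses import dataclass


@dataclass
class Story:
    title: str
    priority: int  # customer-assigned rank; 1 = most important (assumption)
    estimate: int  # developers' rough effort estimate in hypothetical "points"


def plan_release(stories, capacity_per_iteration):
    """Draft a release plan by filling iterations in strict priority order.

    Hypothetical helper: the result is a working draft, meant to be re-run
    whenever the customer reprioritizes, adds stories, or estimates change.
    """
    for story in stories:
        if story.estimate > capacity_per_iteration:
            raise ValueError(f"'{story.title}' won't fit in one iteration; split it")

    iterations = []  # each entry is the list of stories planned for one iteration
    used = 0         # points already committed to the current iteration
    for story in sorted(stories, key=lambda s: s.priority):
        # Start a new iteration when none exists yet or the current one is full.
        if not iterations or used + story.estimate > capacity_per_iteration:
            iterations.append([])
            used = 0
        iterations[-1].append(story)
        used += story.estimate
    return iterations


backlog = [
    Story("Log in", priority=1, estimate=3),
    Story("Search catalog", priority=2, estimate=5),
    Story("Export report", priority=4, estimate=2),
    Story("Print invoice", priority=3, estimate=4),
]

for number, iteration in enumerate(plan_release(backlog, capacity_per_iteration=8), start=1):
    print(number, [s.title for s in iteration])
# 1 ['Log in', 'Search catalog']
# 2 ['Print invoice', 'Export report']
```

Because stories are taken strictly in priority order, a small low-priority story never jumps ahead to fill leftover capacity; a real team might make that trade-off differently, and the customer can still override the draft at the start of each iteration.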

At the start of each iteration, the customer selects which stories will be implemented, based on current priorities and resources. The customer may choose from the initial set or provide new stories. Once an iteration is underway, the selected stories can't be changed until the next iteration. Validation tests are created for each story to ensure that it's implemented correctly in the code. Agile uses test-driven development, in which the tests for each capability are written before actual coding begins. Developers don't work in isolation but communicate with others on the team, including the customer, during both scheduled and ad hoc meetings.
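Test-driven development can be illustrated with a hypothetical story: "Orders of $100 or more get a 10 percent discount." In the sketch below, the validation tests come first in the file because they're written first; running them at that point would fail, since `order_total` doesn't exist yet. The function is then written with just enough logic to make them pass. The story, the function name and the use of integer cents (to avoid floating-point rounding) are all assumptions for illustration; teams usually run such tests with a framework such as pytest or unittest rather than calling them by hand.

```python
# Step 1: write the validation tests for the (hypothetical) story first.
# Story: "Orders of $100 or more get a 10 percent discount." Prices are in cents.

def test_no_discount_below_threshold():
    assert order_total([4000, 5000]) == 9000   # $90.00, no discount


def test_discount_at_threshold():
    assert order_total([6000, 4000]) == 9000   # $100.00 -> $90.00


def test_discount_above_threshold():
    assert order_total([15000]) == 13500       # $150.00 -> $135.00


# Step 2: run the tests and watch them fail (order_total isn't written yet).
# Step 3: write just enough code to make them pass.

def order_total(prices_in_cents):
    """Return the order total in cents, applying the story's discount rule."""
    subtotal = sum(prices_in_cents)
    if subtotal >= 10000:
        return subtotal * 9 // 10  # 10 percent off; integer cents, rounded down
    return subtotal


if __name__ == "__main__":
    for test in (test_no_discount_below_threshold,
                 test_discount_at_threshold,
                 test_discount_above_threshold):
        test()
    print("all story tests pass")
```

In this style, if the customer later changes the rule (say, a different threshold), the tests are updated first and the code follows, so each story's tests remain an executable statement of what the customer asked for.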

At the end of each iteration, the customer reviews the new capabilities and then selects which stories the development team will work on during the next iteration. This creates a cycle that allows the team to learn from each iteration, apply the new knowledge to the next iteration, and create an upward spiral of evolving quality and positive refinement.

If Waterfall is the bureaucrats' attempt to control the geek rabble, agile is a grass-roots revolution by the people who actually build software. But the men in the suits are starting to catch on. A recent report stated that 41 percent of organizations surveyed have adopted an agile methodology. The Standish Group lists the agile process as one of the top 10 reasons for software project success.

Although the practices described previously are designed specifically for software development, practically any quality improvement practice can benefit from agile's universal insights:

• Because change is inevitable, processes should be equipped to leverage it for competitive advantage.

• Quality evolves most effectively through an ongoing cycle that converts knowledge into action and action into knowledge. Processes should be put into place that foster ongoing reflection on work performed and new action based on the insights gained.

• Written documentation and statistical analysis are no substitute for person-to-person communication. An organization's vital tacit knowledge will best emerge through processes that foster ongoing dialogue.

• Communication shouldn't be constrained by traditional organizational boundaries but should extend to connect all stakeholders. Insights that emerge from cross-discipline communication are uniquely valuable. When groups are geographically separated, computer-mediated collaboration tools can support this process.

• The best way for a project to meet a customer's real needs is through an iterative process that includes the customer as an active member of the project team.

• Test early, often and as granularly as possible. Processes should exist that facilitate ongoing tests of both individual components and the emerging integrated product.

The goal of an agile project isn't simply to meet initial requirements. Whether the task is designing a new car or building a better mousetrap, a project that takes an agile approach creates ever-increasing clarity that fosters continuous improvement and innovation. Rather than just meeting initial expectations, agile is designed to deliver results better than anyone could originally have imagined.

David Elfanbaum is co-founder and vice president of Asynchrony Solutions Inc. (www.asolutions.com). Asynchrony Solutions is an innovative software technology firm with a growing line of products and services focused on agile application development, systems integration, collaboration and knowledge management. Clients include Global 2000 companies and government agencies.

For more information about agile methodology, a good place to start is the Agile Alliance, a nonprofit organization that supports individuals and organizations who use agile approaches to develop software (www.agilealliance.org).
