Kainops invius, lateral and ventral. Credit: Moussa Direct Ltd. archive

Five hundred million years ago, the oceans teemed with trillions of trilobites, creatures that were distant cousins of horseshoe crabs. All trilobites had a wide range of vision, thanks to compound eyes: single eyes composed of tens to thousands of tiny independent units, each with its own cornea, lens, and light-sensitive cells. But one species, Dalmanitina socialis, was exceptionally farsighted. Its bifocal eyes, each mounted on a stalk and composed of two lenses that bent light at different angles, enabled these sea creatures to simultaneously view prey floating nearby as well as distant enemies approaching from more than a kilometer away.

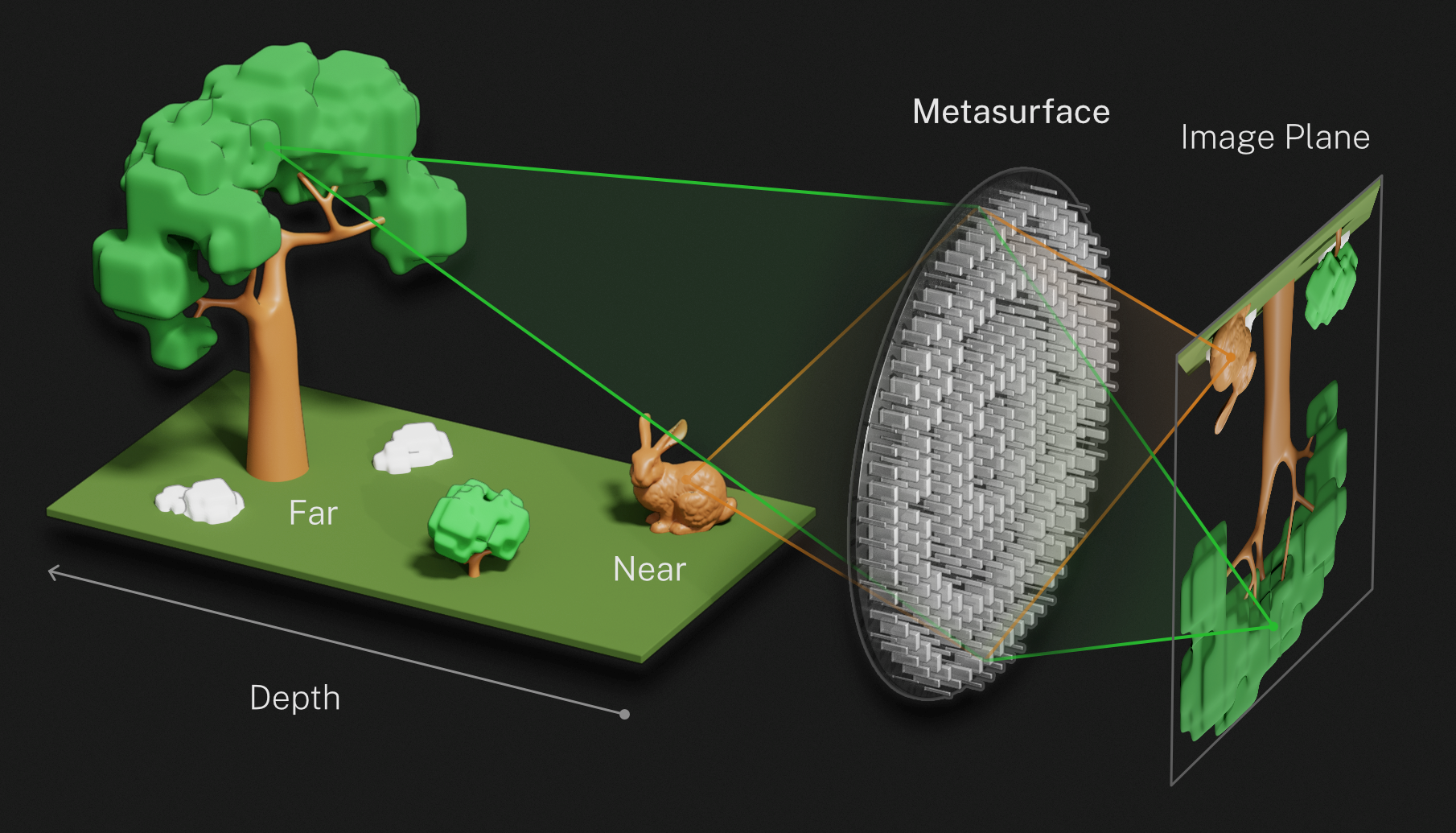

Inspired by the eyes of D. socialis, researchers at the National Institute of Standards and Technology (NIST) have developed a miniature camera featuring a bifocal lens with a record-setting depth of field (the distance over which the camera can produce sharp images in a single photo). The camera can simultaneously image objects as close as 3 cm and as far away as 1.7 km. A computer algorithm corrects for aberrations, sharpens objects at intermediate distances between these near and far focal lengths, and generates a final all-in-focus image covering this enormous depth of field.

Inspired by the compound eyes of Dalmanitina socialis, a species of trilobite, researchers at NIST developed a metalens that can simultaneously image objects both near and far. This illustration shows the lens structure of this extinct trilobite. Credit: NIST.

Such lightweight, large-depth-of-field cameras, which integrate photonic technology at the nanometer scale with software-driven photography, promise to revolutionize future high-resolution imaging systems. In particular, the cameras would greatly boost the capacity to produce highly detailed images of cityscapes, groups of organisms that occupy a large field of view, and other photographic applications in which both near and far objects must be brought into sharp focus.

NIST researchers Amit Agrawal and Henri Lezec, along with their colleagues from the University of Maryland in College Park and Nanjing University, describe their work online in the April 19 issue of Nature Communications.

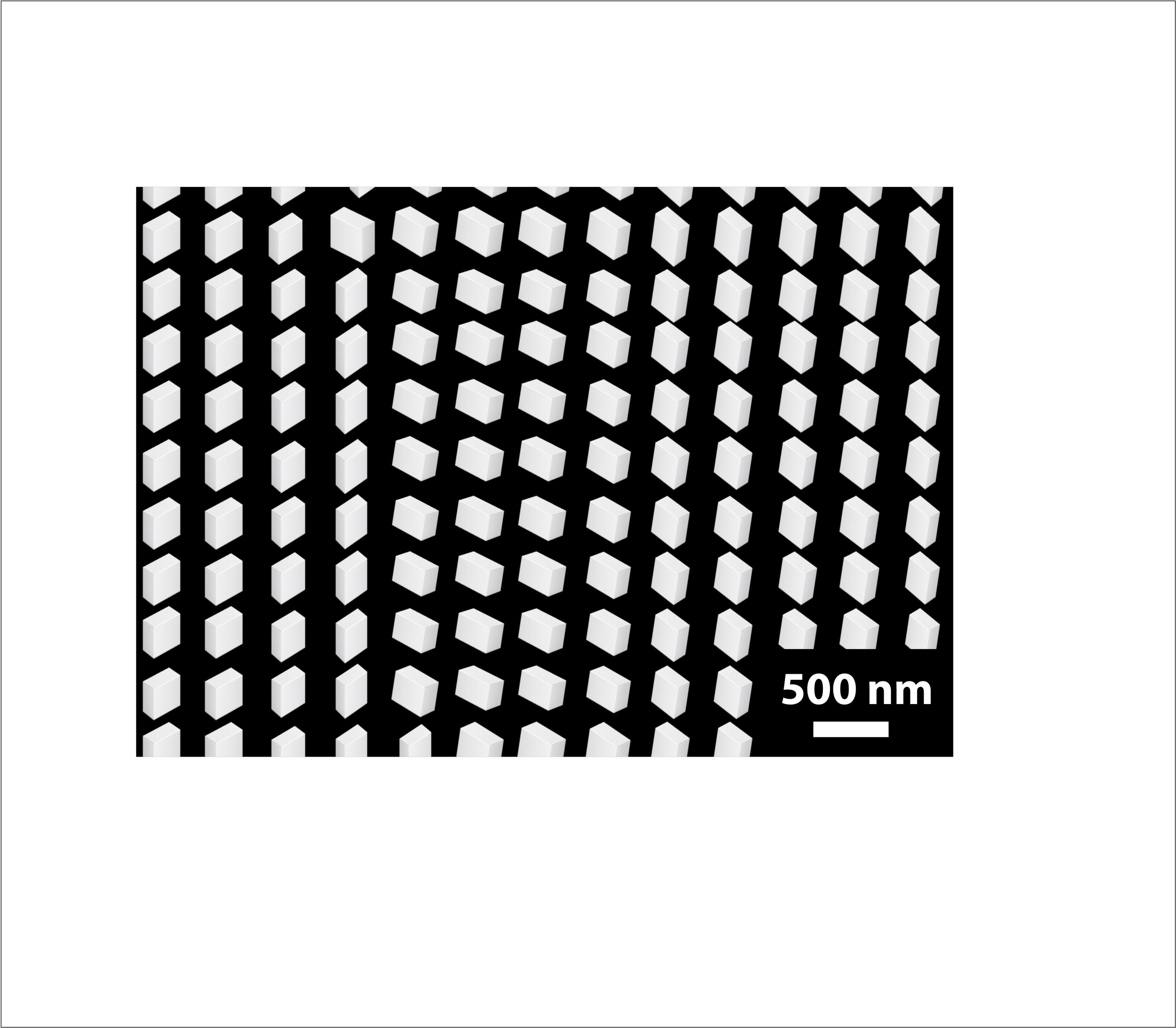

Scanning electron microscope image of the titanium oxide nanopillars that make up the metalens. The scale is 500 nanometers (nm). Credit: NIST.

The researchers fabricated an array of tiny lenses known as metalenses. These ultrathin films are etched or imprinted with groupings of nanoscale pillars tailored to manipulate light in specific ways. To design their metalenses, Agrawal and his colleagues studded a flat surface of glass with millions of tiny, rectangular, nanometer-scale pillars. The shape and orientation of the constituent nanopillars focused light in such a way that the metasurface simultaneously acted as a macro lens (for close-up objects) and a telephoto lens (for distant ones).

Specifically, the nanopillars captured light from a scene of interest, which can be divided into two equal parts: left-circularly polarized light and right-circularly polarized light. (Polarization refers to the direction of the electric field of a light wave; left-circularly polarized light has an electric field that rotates counterclockwise, while right-circularly polarized light has an electric field that rotates clockwise.)
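The equal split can be checked with a little Jones-vector arithmetic. The sketch below is an illustration, not the researchers' code: it assumes ideal, fully linearly polarized input light, and the names `linear` and `circular_power_split` are hypothetical. Which circular basis vector is labeled left or right depends on the sign convention in use.

```python
import math

INV_SQRT2 = 1.0 / math.sqrt(2.0)

# Circular-polarization basis as Jones vectors (x and y field components).
# Assumption: which one is called "left" depends on the sign convention used.
LEFT = (complex(INV_SQRT2, 0.0), complex(0.0, INV_SQRT2))
RIGHT = (complex(INV_SQRT2, 0.0), complex(0.0, -INV_SQRT2))


def linear(theta_deg):
    """Jones vector for linearly polarized light at theta_deg from the x axis."""
    t = math.radians(theta_deg)
    return (complex(math.cos(t), 0.0), complex(math.sin(t), 0.0))


def circular_power_split(jones):
    """Return the fractions of optical power in the LEFT and RIGHT components."""
    total = sum(abs(c) ** 2 for c in jones)

    def fraction(basis):
        # Projection <basis|jones>: conjugate the basis vector, then dot product.
        amplitude = sum(b.conjugate() * c for b, c in zip(basis, jones))
        return abs(amplitude) ** 2 / total

    return fraction(LEFT), fraction(RIGHT)


for angle in (0, 30, 90):
    left, right = circular_power_split(linear(angle))
    print(f"{angle:>3} deg linear: left = {left:.3f}, right = {right:.3f}")
```

Whatever the angle of the linear polarization, half of the power lands in each circular component, which is why a polarization-sensitive lens can treat the two halves as separate imaging channels.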

The nanopillars bent the left- and right-circularly polarized light by different amounts, depending on the orientation of the nanopillars. The team arranged the nanopillars, which were rectangular, so that some of the incoming light had to travel through the longer part of the rectangle and some through the shorter part. In the longer path, light had to pass through more material and therefore bent more. For the shorter path, the light had less material to travel through, and therefore there was less bending.

Illustration of how the metalens modeled on the compound lens of a trilobite simultaneously focuses objects both near (rabbit) and far (tree). Credit: S. Kelley/NIST.

Light that is bent by different amounts is brought to a different focus. The greater the bending, the more closely the light is focused. In this way, depending on whether light traveled through the longer or shorter part of the rectangular nanopillars, the metalens produces images of objects both distant (1.7 km away) and near (a few centimeters).
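The link between bending and focus can be illustrated with the textbook thin-lens equation, 1/f = 1/d_object + 1/d_image. The sketch below treats each polarization channel as a single ideal thin lens, which is a strong simplification of the actual camera. The lens-to-sensor distance `SENSOR_DISTANCE_M` is a made-up value chosen only for illustration; the two object distances are the ones quoted in the article.

```python
def focal_length_for(object_distance_m, image_distance_m):
    """Solve the thin-lens equation 1/f = 1/d_object + 1/d_image for f."""
    return 1.0 / (1.0 / object_distance_m + 1.0 / image_distance_m)


# Hypothetical lens-to-sensor spacing (not a parameter of the NIST camera).
SENSOR_DISTANCE_M = 0.05

# Object distances quoted in the article.
NEAR_OBJECT_M = 0.03    # 3 cm
FAR_OBJECT_M = 1700.0   # 1.7 km

f_near = focal_length_for(NEAR_OBJECT_M, SENSOR_DISTANCE_M)
f_far = focal_length_for(FAR_OBJECT_M, SENSOR_DISTANCE_M)

print(f"focal length needed for the near object: {f_near * 1000:.2f} mm")
print(f"focal length needed for the far object:  {f_far * 1000:.2f} mm")
```

Under these assumptions, the near channel needs the shorter focal length, meaning stronger bending, for both objects to come into focus on the same sensor plane.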

Without further processing, however, objects at intermediate distances (several meters from the camera) would be left unfocused. Agrawal and his colleagues used a neural network—a computer algorithm that mimics the human nervous system—to teach software to recognize and correct for defects such as blurriness and color aberration in the objects that resided midway between the near and far focus of the metalens. The team tested its camera by placing objects of various colors, shapes, and sizes at different distances in a scene of interest, then applying software correction to generate a final image that was focused and free of aberrations over the entire kilometer range of depth of field.
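To see why intermediate distances come out blurred before software correction, a geometric-optics estimate of the blur spot is enough. The sketch below again models each channel as an ideal thin lens and ignores diffraction and the neural-network step entirely. The sensor distance and aperture are hypothetical values, not the camera's real parameters; only the 3 cm and 1.7 km focus distances come from the article.

```python
def blur_diameter(focal_length_m, object_distance_m, sensor_distance_m, aperture_m):
    """Geometric blur-spot diameter on the sensor for a thin lens.

    Uses blur = aperture * |1 - s * (1/f - 1/d)|, which is zero when the
    object at distance d is imaged exactly onto the sensor at distance s.
    """
    vergence = 1.0 / focal_length_m - 1.0 / object_distance_m
    return aperture_m * abs(1.0 - sensor_distance_m * vergence)


# Hypothetical geometry, for illustration only.
SENSOR_M = 0.05
APERTURE_M = 0.01

# Focal lengths that put 3 cm and 1.7 km in focus on that sensor plane.
F_NEAR_M = 1.0 / (1.0 / 0.03 + 1.0 / SENSOR_M)
F_FAR_M = 1.0 / (1.0 / 1700.0 + 1.0 / SENSOR_M)

for distance_m in (0.03, 5.0, 1700.0):
    near_blur = blur_diameter(F_NEAR_M, distance_m, SENSOR_M, APERTURE_M)
    far_blur = blur_diameter(F_FAR_M, distance_m, SENSOR_M, APERTURE_M)
    print(f"object at {distance_m:>7} m: "
          f"near channel blur = {near_blur * 1e3:.4f} mm, "
          f"far channel blur = {far_blur * 1e3:.4f} mm")
```

In this toy model each channel is sharp only at its own design distance, and an object a few meters away is blurred in both, which is the gap the software correction is meant to close.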

The metalenses developed by the team boost light-gathering ability without sacrificing image resolution. In addition, because the system automatically corrects for aberrations, it has a high tolerance for error, enabling researchers to use simple, easy-to-fabricate designs for the miniature lenses, Agrawal says.

Updated April 21, 2022, on NIST News.

First published April 19, 2022, on Nature Communications.

Paper: Qingbin Fan, Weizhu Xu, Xuemei Hu, Wenqi Zhu, Tao Yue, Cheng Zhang, Feng Yan, Lu Chen, Henri J. Lezec, Yanqing Lu, Amit Agrawal, and Ting Xu. “Trilobite-inspired neural nanophotonic light-field camera with extreme depth-of-field,” April 19, 2022.
